J. Wang, X. Lin, Y. Zuo, and J. Wu

ACM Transactions on Information Systems (TOIS), 2021

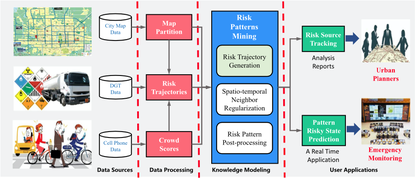

Recent years have witnessed the emergence of worldwide megalopolises and the accompanying public safety events, making urban safety a top priority in modern urban management. Among various threats, dangerous goods such as gas and hazardous chemicals transported through cities have bred repeated tragedies and become the deadly “bomb” we sleep with every day. While tremendous research efforts have been devoted to dangerous goods transportation (DGT) issues, further study is still greatly needed to quantify this problem and explore its intrinsic dynamics from a big data perspective. In this article, we present a novel system called DGeye, which fuses DGT trajectory data with residential population data for danger perception and prediction. Specifically, DGeye first develops a probabilistic graphical model-based approach to mine spatio-temporally adjacent risk patterns from population-aware risk trajectories. Then, DGeye builds a novel causality network among risk patterns for risk pain-point identification, risk source attribution, and online risky state prediction. Experiments on both Beijing and Tianjin demonstrate the effectiveness of DGeye in real-life DGT risk management. As a case in point, our report powered by DGeye successfully drove the government to lay down gas pipelines for the famous Guijie food street in Beijing.

@article{wang2021dgeye,
  title={DGeye: Probabilistic risk perception and prediction for urban dangerous goods management},
  author={Wang, Jingyuan and Lin, Xin and Zuo, Yuan and Wu, Junjie},
  journal={ACM Transactions on Information Systems (TOIS)},
  volume={39},
  number={3},
  pages={1--30},
  year={2021},
  publisher={ACM New York, NY}
}